I thought I would give you a quick rundown of what I am using in my lab.

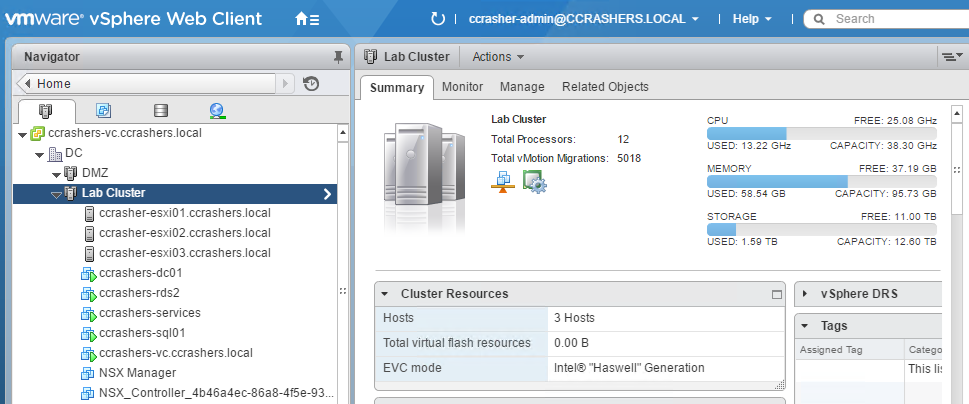

Compute

3 x Dell T20

Each with:

- Intel Xeon E3-1225 3.2GHz quad-core processor

- 32GB RAM

- 8GB USB Drive for ESXi installation

- 2TB SATA Drive

I purchased these from Servers Plus when they had a £100 cashback offer on. Keep your eyes out for bargains, not just from Dell but from all vendors.

To achieve what I wanted, I added some hardware to the hosts. These extras let me split my networking requirements and also run vSAN.

Added Extras:

- Lycom DT-129, PCIe 3.0 x4 3.3V5A Host Adapter for PCIe-NVMe M.2 110mm SSD (Note: unsupported with my version of vSphere)

- Samsung SM951 128GB M.2 PCIe NVMe High Performance SSD (Note: unsupported with my version of vSphere)

- Intel Quad 82580EB Gigabit Ethernet Controller

vCenter / ESXi

I have deployed the VCSA, version 6U2a (build 4541948).

Each host is running ESXi 6, updated to build 4600944.

Storage

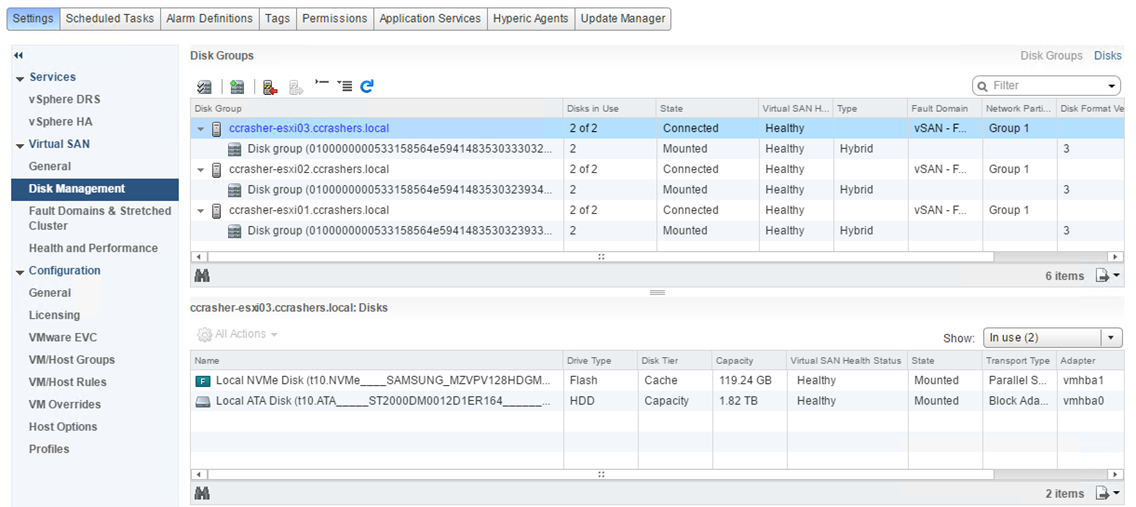

For my infrastructure VMs, I have deployed vSAN using the local 2TB HDD and the NVMe SSD in each of the hosts.

I must say that I have been very impressed with the performance of vSAN.

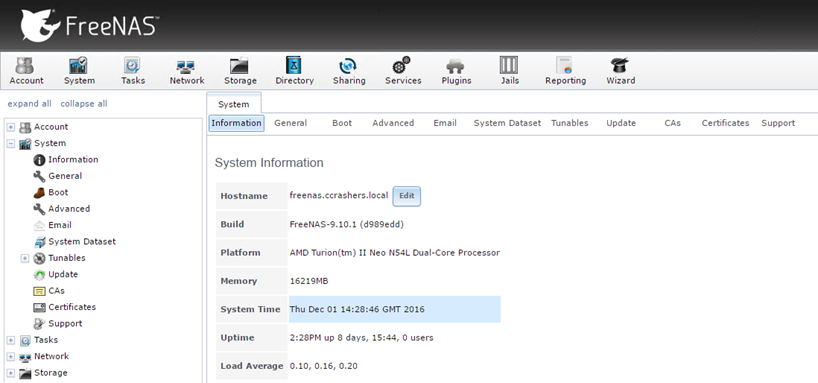
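For a rough sense of how much space the vSAN setup described above provides, here is a minimal back-of-the-envelope sketch. It assumes a hybrid layout where the 2TB HDD in each host is the capacity device and the NVMe SSD is used only for caching, and it assumes a mirroring policy with failures to tolerate (FTT) set to 1; neither is confirmed above. The function name is made up for illustration, and metadata and slack-space overheads are ignored.

```python
def vsan_capacity_tb(hosts: int, capacity_disk_tb: float, ftt: int = 1):
    """Return (raw_tb, usable_tb) for a mirrored vSAN cluster.

    Assumptions (not taken from the post): one capacity disk per host,
    cache devices add no capacity, RAID-1 mirroring with `ftt` failures
    to tolerate, and no allowance for metadata or slack space.
    """
    min_hosts = 2 * ftt + 1  # mirroring needs 2*FTT+1 hosts
    if hosts < min_hosts:
        raise ValueError(f"FTT={ftt} needs at least {min_hosts} hosts")
    raw_tb = hosts * capacity_disk_tb
    usable_tb = raw_tb / (ftt + 1)  # each object is stored ftt+1 times
    return raw_tb, usable_tb


if __name__ == "__main__":
    raw, usable = vsan_capacity_tb(hosts=3, capacity_disk_tb=2.0, ftt=1)
    print(f"raw: {raw} TB, usable (approx.): {usable} TB")
```

With three hosts this puts the cluster at the minimum host count for FTT=1, so the real usable figure will be somewhat lower once overheads are counted.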

I also have an HP ProLiant MicroServer, which I have used to deploy iSCSI datastores.

The details of the HP server are:

- AMD Turion(tm) II Neo N54L Dual-Core Processor

- 16GB RAM

- 4 x 1TB HDD

- 1 x 1TB SSD

- FreeNAS version 9.10.1

Networking

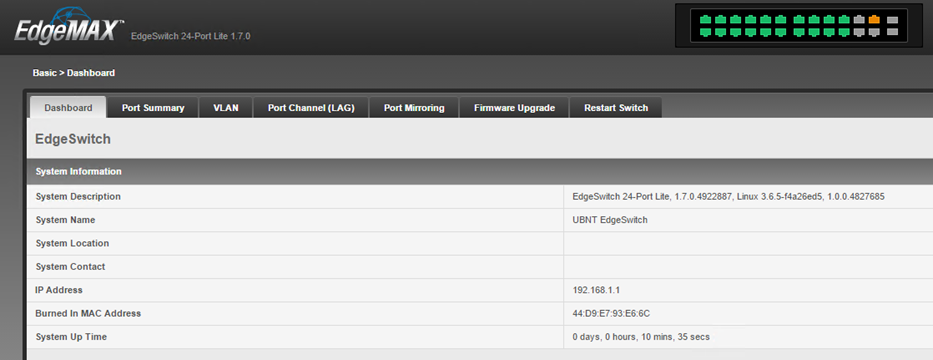

I have invested in a Ubiquiti EdgeSwitch ES-24-LITE switch.

Now I like this switch, and I like Ubiquiti, but I have had some issues with Jumbo Frames not working. After some back and forth with Tech Support, a new firmware version has been released, which I will try to see whether Jumbo Frames start to work.
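A common way to check whether Jumbo Frames really pass end to end is to send a ping with "don't fragment" set and a payload that exactly fills the MTU. The sketch below works out that payload size and builds a matching `vmkping` command. It assumes an MTU of 9000 and IPv4 without header options; the target address is a placeholder from the documentation range, not an address from my lab, and the helper names are made up for illustration.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    overhead = IPV4_HEADER_BYTES + ICMP_HEADER_BYTES
    if mtu <= overhead:
        raise ValueError(f"MTU must be larger than {overhead} bytes")
    return mtu - overhead


def vmkping_command(target: str, mtu: int = 9000) -> str:
    """Build a vmkping call: -d sets don't-fragment, -s sets payload size."""
    return f"vmkping -d -s {max_ping_payload(mtu)} {target}"


if __name__ == "__main__":
    # 192.0.2.10 is a placeholder address (assumption, not from the lab).
    print(vmkping_command("192.0.2.10"))
```

If the command fails at this size but succeeds with a payload of 1472 (the equivalent for a 1500-byte MTU), something in the path, such as the switch, is not passing Jumbo Frames.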

For my host networking I am using 2 vDS: one for the management/infrastructure port groups and another for all of my tenant port groups. I am going to be running a lot of tests within vRA/vRO, and I want to keep them separate.